Extraction, transformation and load

3/10/2024

The volume of data being extracted affects system design. Solutions built for low-volume data do not scale well as data quantity increases. With large amounts of data, you need to implement parallel extraction solutions, which are complex and difficult to maintain from an engineering perspective.

You need to be aware of source limitations when extracting data. For example, some sources (such as APIs and webhooks) impose limits on how much data you can extract at once. Your data engineers need to work around these barriers to ensure system reliability.

You validate data either at extraction (before pushing it down the ETL pipeline) or at the transformation stage. When validating data at extraction, check for missing data (e.g. are some fields empty, even though they should have returned data?) and corrupted data (e.g. are some returned values nonsensical, such as a Facebook ad having -3 clicks?).

Based on your choices of data latency, volume, source limits and data quality (validation), you need to orchestrate your extraction scripts to run at specified times or triggers. This can become complex if you implement a mixed model of architectural design choices (which people often do in order to accommodate different business cases of data use).

Working with different data sources causes problems with overhead and management. The variety of sources increases the demands for monitoring, orchestration and error fixes.

You need to monitor your extraction system on several levels: How much computational power and memory is allocated? Have there been any errors that have caused missing or corrupted data?

With the increasing dependency on third-party apps for doing business, the extraction process must address several API challenges as well. Every API is designed differently, whether you are using apps from giants like Facebook or small software companies.
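The extraction-time validation described above (empty fields that should have returned data, and nonsensical values such as a negative click count) could be sketched roughly as follows. This is only an illustration: the field names `ad_id`, `date`, `clicks` and `impressions` are assumptions made for the example and do not come from any real API.

```python
from typing import Any

# Hypothetical schema: these field names are illustrative assumptions.
REQUIRED_FIELDS = ("ad_id", "date", "clicks", "impressions")
NON_NEGATIVE_FIELDS = ("clicks", "impressions")


def validate_record(record: dict[str, Any]) -> list[str]:
    """Return a list of problems found in one extracted record."""
    problems = []
    # Missing data: a required field is absent, None, or an empty string.
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            problems.append(f"missing value for '{field}'")
    # Corrupted data: counters that can never be negative.
    for field in NON_NEGATIVE_FIELDS:
        value = record.get(field)
        if isinstance(value, (int, float)) and value < 0:
            problems.append(f"nonsensical value for '{field}': {value}")
    return problems


def split_valid_invalid(records):
    """Separate clean records from rejected ones before they enter the pipeline."""
    valid, rejected = [], []
    for record in records:
        problems = validate_record(record)
        if problems:
            rejected.append((record, problems))
        else:
            valid.append(record)
    return valid, rejected
```

Keeping the rejected records together with the reasons for rejection, rather than silently dropping them, makes it easier to answer the monitoring question of whether errors have caused missing or corrupted data.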

The data extraction part of the ETL process poses several challenges. Knowing them can help you prepare for and avoid the issues before they arise.

Depending on how fast you need data to make decisions, the extraction process can be run at lower or higher frequencies. The tradeoff is between stale or late data at lower frequencies and the higher computational resources needed at higher frequencies.

The three architecture designs for extraction differ in how much data each run collects. In the first, each extraction collects all data from the source and pushes it down the data pipeline. In the second, at each new cycle of the extraction process (e.g. every time the ETL pipeline is run), only the new data is collected from the source, along with any data that has changed since the last collection. In the third, the source notifies the ETL system that data has changed, and the ETL pipeline is run to extract the changed data, for example when collecting data via webhooks.

Below are the pros and cons of each architecture design, so that you can better understand the trade-offs of each ETL process design choice:

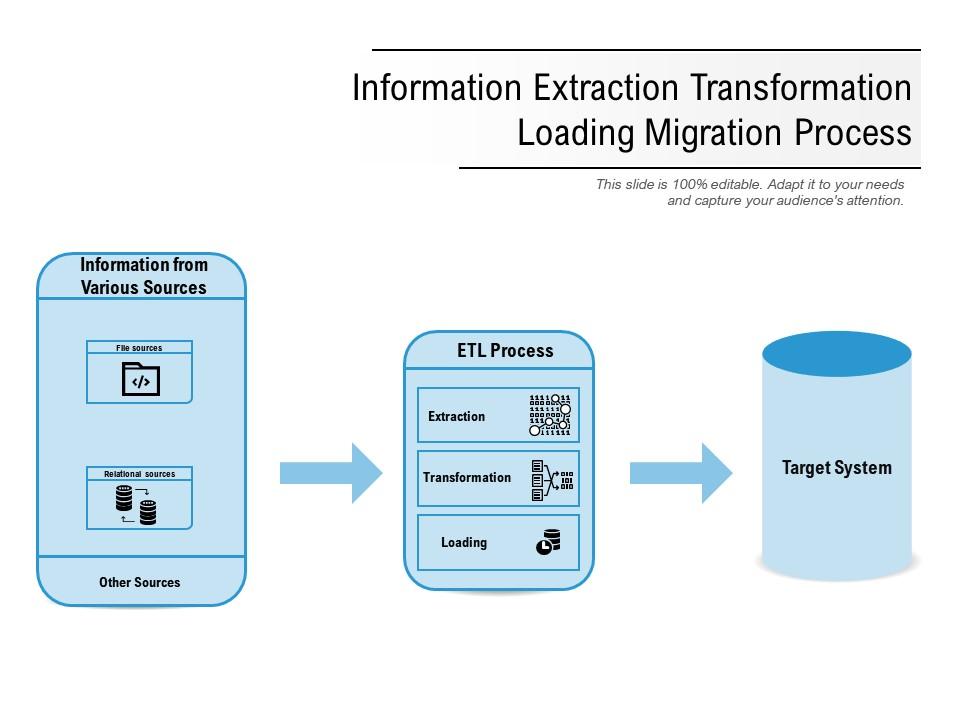
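To make the difference between the three designs concrete, here is a minimal sketch using an in-memory list as a stand-in for the source. The names `SOURCE_ROWS`, `updated_at`, and the three functions are assumptions made for illustration; a real source would be a database or an API, and the designs are commonly referred to as full, incremental, and notification-driven extraction.

```python
from datetime import datetime, timezone

# In-memory stand-in for a source system (hypothetical data).
SOURCE_ROWS = [
    {"id": 1, "updated_at": datetime(2024, 3, 1, tzinfo=timezone.utc), "value": "a"},
    {"id": 2, "updated_at": datetime(2024, 3, 2, tzinfo=timezone.utc), "value": "b"},
    {"id": 3, "updated_at": datetime(2024, 3, 3, tzinfo=timezone.utc), "value": "c"},
]


def extract_full(rows):
    """First design: every run collects all rows from the source."""
    return list(rows)


def extract_incremental(rows, last_watermark):
    """Second design: collect only rows created or changed since the last run.

    Returns the changed rows and the watermark to store for the next run.
    """
    changed = [
        r for r in rows
        if last_watermark is None or r["updated_at"] > last_watermark
    ]
    new_watermark = max((r["updated_at"] for r in changed), default=last_watermark)
    return changed, new_watermark


def handle_change_notification(rows, changed_ids):
    """Third design: the source reports which ids changed (e.g. in a webhook payload)."""
    wanted = set(changed_ids)
    return [r for r in rows if r["id"] in wanted]
```

The incremental variant has to persist the returned watermark between runs; if that value is lost, the safe fallback is one full extraction.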

Now let’s look at the three possible architecture designs for the extract process. When designing the software architecture for extracting data, there are three possible approaches to implementing the core solution.

The “Extract” stage of the ETL process involves collecting structured and unstructured data from its data sources. This data will ultimately be consolidated into a single data repository.

Traditionally, extraction meant getting data from Excel files and relational database management systems (RDBMS), as these were the primary sources of information for businesses. With the increase in Software as a Service (SaaS) applications, the majority of businesses now find valuable information in the apps themselves, e.g. Facebook for advertising performance, Google Analytics for website utilization, Salesforce for sales activities, etc. Today, data extraction is therefore mostly about obtaining information from an app’s storage via APIs or webhooks.

Extracted data may come in several formats, such as relational databases, XML, JSON, and others, and from a wide range of data sources, including cloud, hybrid, and on-premise environments, CRM systems, data storage platforms, analytic tools, etc.
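Because extracted data can arrive as JSON from one source and XML from another, an extraction step often converts both into one common record shape before handing them on. The sketch below uses only the Python standard library; the payload layout (a JSON array of objects, and XML `<row>` elements whose children are fields) is an assumption made for the example.

```python
import json
import xml.etree.ElementTree as ET


def records_from_json(text: str) -> list[dict]:
    """Parse a JSON array of objects into a list of dicts."""
    data = json.loads(text)
    return [dict(item) for item in data]


def records_from_xml(text: str, row_tag: str = "row") -> list[dict]:
    """Parse XML rows into dicts; each child element becomes one field.

    XML carries no type information, so all values stay strings here.
    """
    root = ET.fromstring(text)
    return [
        {child.tag: child.text for child in row}
        for row in root.iter(row_tag)
    ]
```

Note that the XML path returns every value as a string, so type conversion still has to happen later, at validation or at the transformation stage.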
